You’ve built your first ROS 2 package. Your nodes communicate, your services respond, and your launch files start everything with a single command. But so far there has been no robot for that software to drive. It’s time to give it one, in simulation.

Gazebo is the simulator most commonly paired with ROS. It provides physics simulation, sensor simulation, and 3D visualization. With Gazebo, you can test your algorithms on virtual robots before touching any hardware, which avoids hardware costs and makes debugging much faster.

In this guide, you’ll learn to simulate robots from scratch, add sensors, spawn them in Gazebo, and control them using your existing ROS 2 nodes.

Prerequisites: This guide assumes you have ROS 2 Humble installed, your first ROS package built, and basic familiarity with ROS 2 concepts. If you’re new to ROS, start with our ROS Installation Guide and Building Your First ROS Package.

What Is Gazebo and Why Simulate?

Gazebo is an open-source robot simulator that provides:

- Realistic Physics: Rigid body dynamics, collisions, friction, gravity

- Sensor Simulation: Cameras, LIDAR, IMU, GPS, contact sensors

- 3D Visualization: Realistic rendering with lighting and shadows

- Environment Modeling: Custom worlds, obstacles, buildings

- Plugin System: Extend functionality for custom hardware

Why Simulate Before Buying Hardware?

| Aspect | Simulation | Real Hardware |
|---|---|---|
| Cost | Free | $500 – $50,000+ |
| Setup Time | Minutes to hours | Days to weeks |
| Risk | Zero (can’t break virtual robot) | Hardware damage possible |
| Iteration Speed | Fast (instant resets) | Slow (physical setup) |
| Realism | Approximation of physics | True physics |
| Sensors | Perfect sensors (configurable noise) | Real sensor noise and drift |
| Debugging | Easy (pause, inspect, replay) | Challenging |

Best Practice: Develop and test in simulation first. When your algorithms work reliably in Gazebo, then move to real hardware. This workflow saves time, money, and frustration.

Step 1: Install Gazebo with ROS 2

ROS 2 Humble supports Gazebo through the gazebo_ros_pkgs meta-package (apt package ros-humble-gazebo-ros-pkgs). On Humble this is Gazebo Classic (version 11).

Install Gazebo Packages

- Update Package Lists:

sudo apt update

- Install Gazebo and ROS Integration:

sudo apt install ros-humble-gazebo-ros-pkgs ros-humble-gazebo-ros2-control -y

- Install Gazebo (Standalone):

sudo apt install gazebo -y

- Install the Robot State and Joint State Publishers:

sudo apt install ros-humble-robot-state-publisher ros-humble-joint-state-publisher -y

- Verify Installation:

gazebo --version # Should print a version line such as: Gazebo multi-robot simulator, version 11.x.x

Launch Gazebo from ROS 2

- Source ROS 2:

source /opt/ros/humble/setup.bash

- Launch Empty Gazebo World:

ros2 launch gazebo_ros gazebo.launch.py

- Expected Output: The terminal should report that the gzserver and gzclient processes have started. Exact log lines vary between versions. Nothing is spawned at this stage, so no model-spawning message should appear yet.

- Verify Gazebo Window Opens: A 3D window should appear with an empty world. Click and drag to orbit, zoom, and pan.

Step 2: Understanding Robot Description (URDF)

Before simulating a robot, you must describe its geometry and physical properties in a form the simulator can read. The Unified Robot Description Format (URDF) is an XML format for representing robot models.

URDF Basics: The Robot Model

URDF describes robots as a tree of links (rigid bodies) connected by joints (movable connections).

- Links: Physical components with mass, inertia, visual geometry, collision geometry

- Joints: Connections between links with type (revolute, prismatic, continuous, fixed), axis, and limits

- Transmission: Mapping from actuators to joints

- Joint Limits: Position, velocity, and effort limits
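To make the link-and-joint tree concrete, the sketch below parses a tiny URDF string with Python's standard library and reports the root link and each parent-to-child connection. The two-link model and the helper name `describe_tree` are illustrative assumptions, not part of any ROS API.

```python
import xml.etree.ElementTree as ET

# Hypothetical two-link model used only for illustration.
URDF = """
<robot name="demo">
  <link name="base_link"/>
  <link name="arm_link"/>
  <joint name="shoulder" type="continuous">
    <parent link="base_link"/>
    <child link="arm_link"/>
    <axis xyz="0 0 1"/>
  </joint>
</robot>
"""


def describe_tree(urdf_text):
    """Return (root_link_names, [(parent, joint, child), ...]) for a URDF string."""
    robot = ET.fromstring(urdf_text)
    links = {link.get("name") for link in robot.findall("link")}
    edges = [
        (j.find("parent").get("link"), j.get("name"), j.find("child").get("link"))
        for j in robot.findall("joint")
    ]
    children = {child for _, _, child in edges}
    # A root link never appears as a child; a valid URDF tree has exactly one.
    roots = sorted(links - children)
    return roots, edges


roots, edges = describe_tree(URDF)
print("root:", roots)
for parent, joint, child in edges:
    print(f"{parent} --[{joint}]--> {child}")
```

If `roots` ever contains more than one name, some link is not connected to the rest of the robot by a joint.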

Create a Simple Robot Description Package

- Create Package:

cd ~/ros2_ws/src

ros2 pkg create robot_description --build-type ament_python --dependencies robot_state_publisher

- Create URDF Directory:

mkdir -p ~/ros2_ws/src/robot_description/urdf

mkdir -p ~/ros2_ws/src/robot_description/meshes

- Create Your First URDF File:

nano ~/ros2_ws/src/robot_description/urdf/rrbot.urdf

- Add Basic Two-Link Robot:

<?xml version="1.0"?>
<robot name="rrbot">
  <!-- Base Link -->
  <link name="base_link">
    <visual>
      <origin xyz="0 0 0.05" rpy="0 0 0"/>
      <geometry>
        <box size="0.1 0.1 0.1"/>
      </geometry>
      <material name="blue">
        <color rgba="0.1 0.1 0.8 1.0"/>
      </material>
    </visual>
    <collision>
      <origin xyz="0 0 0.05" rpy="0 0 0"/>
      <geometry>
        <box size="0.1 0.1 0.1"/>
      </geometry>
    </collision>
    <inertial>
      <origin xyz="0 0 0.05" rpy="0 0 0"/>
      <mass value="1.0"/>
      <inertia ixx="0.001" ixy="0" ixz="0" iyy="0.001" iyz="0" izz="0.001"/>
    </inertial>
  </link>
  <!-- Joint 1 -->
  <joint name="joint1" type="continuous">
    <parent link="base_link"/>
    <child link="link1"/>
    <origin xyz="0 0 0.1" rpy="0 0 0"/>
    <axis xyz="0 0 1"/>
    <dynamics damping="0.05" friction="0.1"/>
  </joint>
  <!-- Link 1: the 0.2 m box is centered at half its height so it spans joint1 to joint2 -->
  <link name="link1">
    <visual>
      <origin xyz="0 0 0.1" rpy="0 0 0"/>
      <geometry>
        <box size="0.05 0.05 0.2"/>
      </geometry>
      <material name="grey">
        <color rgba="0.5 0.5 0.5 1.0"/>
      </material>
    </visual>
    <collision>
      <origin xyz="0 0 0.1" rpy="0 0 0"/>
      <geometry>
        <box size="0.05 0.05 0.2"/>
      </geometry>
    </collision>
    <inertial>
      <origin xyz="0 0 0.1" rpy="0 0 0"/>
      <mass value="0.5"/>
      <inertia ixx="0.0005" ixy="0" ixz="0" iyy="0.0005" iyz="0" izz="0.0001"/>
    </inertial>
  </link>
  <!-- Joint 2 -->
  <joint name="joint2" type="continuous">
    <parent link="link1"/>
    <child link="link2"/>
    <origin xyz="0 0 0.2" rpy="0 0 0"/>
    <axis xyz="0 1 0"/>
    <dynamics damping="0.05" friction="0.1"/>
  </joint>
  <!-- Link 2 (End Effector): the 0.15 m box is centered at half its height -->
  <link name="link2">
    <visual>
      <origin xyz="0 0 0.075" rpy="0 0 0"/>
      <geometry>
        <box size="0.03 0.03 0.15"/>
      </geometry>
      <material name="red">
        <color rgba="0.8 0.1 0.1 1.0"/>
      </material>
    </visual>
    <collision>
      <origin xyz="0 0 0.075" rpy="0 0 0"/>
      <geometry>
        <box size="0.03 0.03 0.15"/>
      </geometry>
    </collision>
    <inertial>
      <origin xyz="0 0 0.075" rpy="0 0 0"/>
      <mass value="0.3"/>
      <inertia ixx="0.0002" ixy="0" ixz="0" iyy="0.0002" iyz="0" izz="0.00005"/>
    </inertial>
  </link>
</robot>
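The inertia values in the URDF above are round numbers. If you would rather derive them from each link's mass and box size, the standard solid-box formula can be applied. The sketch below does that for the three links; the helper name `box_inertia` is an assumption for illustration, and the result treats each link as a uniform solid box.

```python
def box_inertia(mass, x, y, z):
    """Principal moments of inertia (kg*m^2) of a uniform solid box about its center."""
    ixx = mass / 12.0 * (y**2 + z**2)
    iyy = mass / 12.0 * (x**2 + z**2)
    izz = mass / 12.0 * (x**2 + y**2)
    return ixx, iyy, izz


# Mass (kg) and box size (m) copied from the three links of the rrbot URDF.
LINKS = {
    "base_link": (1.0, 0.1, 0.1, 0.1),
    "link1": (0.5, 0.05, 0.05, 0.2),
    "link2": (0.3, 0.03, 0.03, 0.15),
}

for name, (mass, x, y, z) in LINKS.items():
    ixx, iyy, izz = box_inertia(mass, x, y, z)
    print(f'{name}: ixx="{ixx:.6f}" iyy="{iyy:.6f}" izz="{izz:.6f}"')
```

The printed attributes can be pasted into the matching `<inertia>` elements. Physically plausible inertia values tend to make the simulation behave more predictably than arbitrary ones.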

URDF Component Reference

| Element | Description | Key Attributes |
|---|---|---|
| <robot> | Root element | name, xmlns |
| <link> | Rigid body | name |
| <joint> | Connection between links | name, type |
| <visual> | Visual representation | origin, geometry, material |
| <collision> | Collision geometry | origin, geometry |
| <inertial> | Physics properties | mass, inertia |

Joint Types Reference

| Type | Motion | Use Case |
|---|---|---|
| revolute | Rotates between the lower and upper limits set in <limit> | Arm joints, hinges |
| continuous | Unlimited rotation | Wheels, free-spinning joints |
| prismatic | Linear slide between lower and upper limits | Pistons, linear stages |
| fixed | No motion | Mounting brackets, sensor mounts |
| floating | 6-DOF movement | Floating objects |
| planar | 2D planar motion | Objects on flat surfaces |
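In URDF, revolute and prismatic joints take their range from a `<limit>` element, and a common mistake is to declare one of these types without it. The following sketch lists such joints using only the standard library. The sample robot and the function name `joints_missing_limits` are hypothetical.

```python
import xml.etree.ElementTree as ET

# Joint types for which the URDF format requires a <limit> element.
NEEDS_LIMIT = {"revolute", "prismatic"}


def joints_missing_limits(urdf_text):
    """Return names of revolute/prismatic joints that have no <limit> element."""
    robot = ET.fromstring(urdf_text)
    return [
        joint.get("name")
        for joint in robot.findall("joint")
        if joint.get("type") in NEEDS_LIMIT and joint.find("limit") is None
    ]


# Hypothetical snippet: "elbow" is complete, "slider" is missing its limits.
SAMPLE = """
<robot name="demo">
  <link name="a"/><link name="b"/><link name="c"/>
  <joint name="elbow" type="revolute">
    <parent link="a"/><child link="b"/>
    <limit lower="-1.57" upper="1.57" effort="10" velocity="2.0"/>
  </joint>
  <joint name="slider" type="prismatic">
    <parent link="b"/><child link="c"/>
  </joint>
</robot>
"""

print(joints_missing_limits(SAMPLE))
```

The rrbot model in this guide uses only continuous joints, so it would pass this check without any `<limit>` elements.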

Step 3: Visualize Your Robot in RViz

Before spawning in Gazebo, verify your URDF looks correct in RViz.

- Create Launch File for Visualization:

mkdir -p ~/ros2_ws/src/robot_description/launch
nano ~/ros2_ws/src/robot_description/launch/display.launch.py

- Add RViz Launch Configuration:

"""display.launch.py - Launch RViz with robot description"""
import os

from ament_index_python.packages import get_package_share_directory
from launch import LaunchDescription
from launch_ros.actions import Node


def generate_launch_description():
    # URDF file path
    pkg_share = get_package_share_directory('robot_description')
    urdf_file = os.path.join(pkg_share, 'urdf', 'rrbot.urdf')

    # Read the URDF once and close the file afterwards
    with open(urdf_file) as f:
        robot_description = f.read()

    # Robot State Publisher
    robot_state_publisher = Node(
        package='robot_state_publisher',
        executable='robot_state_publisher',
        name='robot_state_publisher',
        parameters=[{'robot_description': robot_description}],
        output='screen'
    )

    # Joint State Publisher: supplies positions for the two continuous joints,
    # which robot_state_publisher needs before it can place link1 and link2
    joint_state_publisher = Node(
        package='joint_state_publisher',
        executable='joint_state_publisher',
        name='joint_state_publisher',
        output='screen'
    )

    # RViz Node: pass the saved config only if it exists
    rviz_config = os.path.join(pkg_share, 'rviz', 'robot.rviz')
    rviz_node = Node(
        package='rviz2',
        executable='rviz2',
        name='rviz2',
        arguments=['-d', rviz_config] if os.path.exists(rviz_config) else [],
        output='screen'
    )

    return LaunchDescription([
        robot_state_publisher,
        joint_state_publisher,
        rviz_node
    ])

- Build the Package:

cd ~/ros2_ws
colcon build --symlink-install
source install/setup.bash

- Launch RViz Visualization:

ros2 launch robot_description display.launch.py

- Configure RViz:

- Click “Add” in left panel

- Select “RobotModel”

- In the RobotModel display, set Description Topic to “/robot_description”

- In Fixed Frame, enter “base_link”

- You should see your robot displayed

Success Indicator: If you see a colorful robot model matching your URDF, your robot description is correct. If parts are missing or positioned wrong, check your link origins and parent-child relationships.
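If the launch instead fails because rrbot.urdf or the launch file cannot be found, a likely cause is installation: in an `ament_python` package, folders such as `urdf/` and `launch/` are only copied into the package's share directory when `setup.py` lists them in `data_files`. The helper below is a sketch of how those entries could be generated; the function name `share_entries` and the folder list are assumptions based on this guide's layout.

```python
import os
from glob import glob

PACKAGE_NAME = "robot_description"


def share_entries(package_name, folders, root=""):
    """Build setup.py data_files tuples that install each folder's files
    into share/<package_name>/<folder>."""
    entries = []
    for folder in folders:
        files = sorted(
            path
            for path in glob(os.path.join(root, folder, "*"))
            if os.path.isfile(path)
        )
        if files:  # skip folders that are empty or do not exist yet
            entries.append((os.path.join("share", package_name, folder), files))
    return entries


# Folders used in this guide; extend the list if you add more.
DATA_FOLDERS = ["urdf", "launch", "config", "worlds", "rviz", "meshes"]

if __name__ == "__main__":
    for target, files in share_entries(PACKAGE_NAME, DATA_FOLDERS):
        print(target, files)
```

In `setup.py`, the result would be appended to the `data_files` list that `ros2 pkg create` generated, for example `data_files=[...existing entries...] + share_entries(package_name, DATA_FOLDERS)`, followed by a rebuild with colcon.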

Step 4: Spawn Your Robot in Gazebo

Now let’s bring your robot into Gazebo’s physics simulation.

Method 1: Using gazebo_ros Spawner

- Launch Gazebo with Empty World:

ros2 launch gazebo_ros gazebo.launch.py

- Spawn Robot Using spawn_entity Script (robot_state_publisher must already be running, for example through display.launch.py from Step 3, so that /robot_description is being published):

ros2 run gazebo_ros spawn_entity.py -topic /robot_description -entity rrbot

- Verify Robot Appears: You should see your robot model in the Gazebo window, falling and settling on the ground plane.

Method 2: Complete Launch File

- Create Gazebo Launch File:

nano ~/ros2_ws/src/robot_description/launch/gazebo.launch.py

- Add Launch Configuration:

"""gazebo.launch.py - Launch Gazebo with robot"""
import os

from ament_index_python.packages import get_package_share_directory
from launch import LaunchDescription
from launch.actions import ExecuteProcess
from launch_ros.actions import Node


def generate_launch_description():
    pkg_share = get_package_share_directory('robot_description')
    urdf_file = os.path.join(pkg_share, 'urdf', 'rrbot.urdf')

    # Read the URDF once and close the file afterwards
    with open(urdf_file) as f:
        robot_description = f.read()

    # Launch Gazebo with the factory plugin that provides the spawn service
    gazebo = ExecuteProcess(
        cmd=['gazebo', '--verbose', '-s', 'libgazebo_ros_factory.so'],
        output='screen'
    )

    # Robot State Publisher
    robot_state_publisher = Node(
        package='robot_state_publisher',
        executable='robot_state_publisher',
        name='robot_state_publisher',
        parameters=[{'robot_description': robot_description}],
        output='screen'
    )

    # Spawn Robot from the /robot_description topic
    spawn_entity = Node(
        package='gazebo_ros',
        executable='spawn_entity.py',
        arguments=['-entity', 'rrbot', '-topic', '/robot_description'],
        output='screen'
    )

    return LaunchDescription([
        gazebo,
        robot_state_publisher,
        spawn_entity
    ])

- Build and Launch: Rebuild the workspace, source it, then run:

ros2 launch robot_description gazebo.launch.py

Step 5: Control Your Robot in Gazebo

Now let’s control your simulated robot’s joints using ROS 2.

Install ros2_control

- Install ros2_control Packages:

sudo apt install ros-humble-ros2-control ros-humble-ros2-controllers ros-humble-gazebo-ros2-control -y

Add ros2_control Tags to URDF

On ROS 2, gazebo_ros2_control does not read the ROS 1 style <transmission> blocks with hardwareInterface entries. Joint interfaces are declared in a <ros2_control> tag instead.

- Open the URDF:

nano ~/ros2_ws/src/robot_description/urdf/rrbot.urdf

- Add ros2_control and Gazebo Plugin Blocks (before closing </robot> tag):

<!-- ros2_control: hardware plugin and joint interfaces -->
<ros2_control name="GazeboSystem" type="system">
  <hardware>
    <plugin>gazebo_ros2_control/GazeboSystem</plugin>
  </hardware>
  <joint name="joint1">
    <command_interface name="effort"/>
    <state_interface name="position"/>
    <state_interface name="velocity"/>
  </joint>
  <joint name="joint2">
    <command_interface name="effort"/>
    <state_interface name="position"/>
    <state_interface name="velocity"/>
  </joint>
</ros2_control>
<!-- Gazebo Configuration -->
<gazebo>
  <plugin name="gazebo_ros2_control" filename="libgazebo_ros2_control.so">
    <parameters>$(find robot_description)/config/rrbot_controllers.yaml</parameters>
  </plugin>
</gazebo>

Note: $(find robot_description) is xacro syntax and is only expanded when the file is processed by xacro. In a plain .urdf file, replace it with the absolute path to the installed rrbot_controllers.yaml.

Create Controller Configuration

- Create Config Directory:

mkdir -p ~/ros2_ws/src/robot_description/config

- Create Controller YAML:

nano ~/ros2_ws/src/robot_description/config/rrbot_controllers.yaml

- Add Controller Configuration:

controller_manager:
  ros__parameters:
    update_rate: 100
    joint_state_broadcaster:
      type: joint_state_broadcaster/JointStateBroadcaster
    joint_effort_controller:
      type: effort_controllers/JointGroupEffortController

joint_state_broadcaster:
  ros__parameters:
    publish_rate: 50.0

joint_effort_controller:
  ros__parameters:
    joints:
      - joint1
      - joint2
    command_interface: effort
    state_interface:
      - position
      - velocity

Control Joints with Command-Line

- Load Controllers:

ros2 control load_controller --set-state active joint_state_broadcaster
ros2 control load_controller --set-state active joint_effort_controller

- List Controllers:

ros2 control list_controllers

- Send Effort Commands:

ros2 topic pub /joint_effort_controller/commands std_msgs/msg/Float64MultiArray "{data: [0.5, 0.5]}"

- Watch Robot Move: You should see your robot’s joints rotate in Gazebo.
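For a rough idea of how fast a joint should spin under a constant effort, a simple first-order model balances the commanded effort against the `damping` and `friction` values from the joint's `<dynamics>` tag. The sketch below is only an estimate under that assumption: Gazebo's physics engine handles friction differently in detail, gravity also acts on joint2, and because base_link is not fixed to the world the base itself can turn. The function name `steady_state_velocity` is hypothetical.

```python
def steady_state_velocity(effort, damping, friction):
    """Velocity (rad/s) at which viscous damping plus constant friction
    balance a constant effort, in a simple first-order joint model."""
    if abs(effort) <= friction:
        return 0.0  # effort too small to overcome friction
    sign = 1.0 if effort > 0 else -1.0
    return sign * (abs(effort) - friction) / damping


# Effort from the command above; damping and friction from the rrbot URDF.
EFFORT = 0.5    # N*m
DAMPING = 0.05  # N*m*s/rad
FRICTION = 0.1  # N*m

omega = steady_state_velocity(EFFORT, DAMPING, FRICTION)
print(f"estimated steady-state velocity: {omega:.2f} rad/s")
```

With the values used in this guide the estimate comes out at about 8 rad/s, so a 0.5 N·m command is enough to spin these light links quite quickly. Smaller efforts are easier to follow visually.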

Step 6: Add Sensors to Your Robot

Sensors bring your simulation to life. Let’s add a camera and LIDAR to see what the robot perceives.

Add a Camera Sensor

- Create Camera Link:

nano ~/ros2_ws/src/robot_description/urdf/rrbot_with_camera.urdf

- Add Camera Link and Sensor (inside <robot>, alongside the links and joints from rrbot.urdf):

<!-- Camera Link -->
<link name="camera_link">
  <visual>
    <origin xyz="0 0 0" rpy="0 0 0"/>
    <geometry>
      <box size="0.02 0.05 0.02"/>
    </geometry>
    <material name="black">
      <color rgba="0 0 0 1.0"/>
    </material>
  </visual>
  <collision>
    <origin xyz="0 0 0" rpy="0 0 0"/>
    <geometry>
      <box size="0.02 0.05 0.02"/>
    </geometry>
  </collision>
</link>
<!-- Camera Joint -->
<joint name="camera_joint" type="fixed">
  <parent link="link2"/>
  <child link="camera_link"/>
  <origin xyz="0 0 0.1" rpy="0 0 0"/>
</joint>
<!-- Camera Sensor Plugin -->
<gazebo reference="camera_link">
  <sensor type="camera" name="camera_sensor">
    <update_rate>30.0</update_rate>
    <camera name="front_camera">
      <horizontal_fov>1.3962634</horizontal_fov>
      <image>
        <width>640</width>
        <height>480</height>
        <format>R8G8B8</format>
      </image>
      <clip>
        <near>0.02</near>
        <far>300</far>
      </clip>
    </camera>
    <plugin name="camera_controller" filename="libgazebo_ros_camera.so">
      <!-- The ROS 2 plugin publishes <camera_name>/image_raw and <camera_name>/camera_info -->
      <camera_name>camera</camera_name>
      <frame_name>camera_link</frame_name>
    </plugin>
  </sensor>
</gazebo>
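The `<horizontal_fov>` and image size in the camera block determine the intrinsics of an ideal pinhole camera. As a worked example, the sketch below converts them to a focal length in pixels and a vertical field of view, assuming square pixels and no lens distortion. The function names are illustrative only.

```python
import math


def focal_length_px(horizontal_fov, image_width):
    """Pinhole focal length in pixels for a horizontal field of view in radians."""
    return image_width / (2.0 * math.tan(horizontal_fov / 2.0))


def vertical_fov(horizontal_fov, image_width, image_height):
    """Vertical field of view in radians, assuming square pixels."""
    f = focal_length_px(horizontal_fov, image_width)
    return 2.0 * math.atan(image_height / (2.0 * f))


HFOV = 1.3962634  # rad, from <horizontal_fov> (80 degrees)
WIDTH, HEIGHT = 640, 480

f = focal_length_px(HFOV, WIDTH)
print(f"horizontal FOV: {math.degrees(HFOV):.1f} deg")
print(f"focal length:   {f:.1f} px")
print(f"vertical FOV:   {math.degrees(vertical_fov(HFOV, WIDTH, HEIGHT)):.1f} deg")
```

The focal length works out to roughly 381 pixels, which is a useful value to compare against the camera_info message when checking that a perception node receives the intrinsics you expect.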

Add a LIDAR Sensor

- Add LIDAR Link and Plugin:

<!-- LIDAR Link -->
<link name="hokuyo_link">
  <visual>
    <origin xyz="0 0 0" rpy="0 0 0"/>
    <geometry>
      <cylinder length="0.03" radius="0.02"/>
    </geometry>
    <material name="green">
      <color rgba="0 1 0 1.0"/>
    </material>
  </visual>
</link>
<!-- LIDAR Joint -->
<joint name="hokuyo_joint" type="fixed">
  <parent link="base_link"/>
  <child link="hokuyo_link"/>
  <origin xyz="0 0 0.15" rpy="0 0 0"/>
</joint>
<!-- LIDAR Sensor Plugin -->
<gazebo reference="hokuyo_link">
  <sensor type="ray" name="hokuyo_sensor">
    <pose>0 0 0 0 0 0</pose>
    <update_rate>40</update_rate>
    <ray>
      <scan>
        <horizontal>
          <samples>720</samples>
          <resolution>1</resolution>
          <min_angle>-2.356194</min_angle>
          <max_angle>2.356194</max_angle>
        </horizontal>
      </scan>
      <range>
        <min>0.10</min>
        <max>30.0</max>
        <resolution>0.01</resolution>
      </range>
      <noise>
        <type>gaussian</type>
        <mean>0.0</mean>
        <stddev>0.01</stddev>
      </noise>
    </ray>
    <plugin name="gazebo_ros_hokuyo" filename="libgazebo_ros_ray_sensor.so">
      <ros>
        <namespace>/</namespace>
        <remapping>~/out:=scan</remapping>
      </ros>
      <output_type>sensor_msgs/LaserScan</output_type>
    </plugin>
  </sensor>
</gazebo>
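The scan settings above translate directly into the geometry of each LaserScan message. The sketch below computes the angular spacing between beams, assuming the 720 samples are spread evenly across the range with both end angles included, and converts a list of ranges into x/y points in the sensor frame. The function name `scan_to_points` and the three-beam demo are hypothetical.

```python
import math


def scan_to_points(ranges, angle_min, angle_increment, range_min, range_max):
    """Convert LaserScan-style ranges to (x, y) points in the sensor frame."""
    points = []
    for i, r in enumerate(ranges):
        if not (range_min <= r <= range_max):
            continue  # drops inf, NaN, and out-of-range readings
        angle = angle_min + i * angle_increment
        points.append((r * math.cos(angle), r * math.sin(angle)))
    return points


# Values from the <ray> block of the LIDAR sensor.
SAMPLES = 720
ANGLE_MIN, ANGLE_MAX = -2.356194, 2.356194
ANGLE_INCREMENT = (ANGLE_MAX - ANGLE_MIN) / (SAMPLES - 1)
print(f"angle increment: {math.degrees(ANGLE_INCREMENT):.3f} deg per beam")

# Tiny demo with three beams at -90, 0 and +90 degrees; the first sees nothing.
demo = scan_to_points([math.inf, 1.0, 2.0], -math.pi / 2, math.pi / 2, 0.10, 30.0)
print(demo)
```

In a real node the same conversion would be applied to the `ranges`, `angle_min`, and `angle_increment` fields of each message received on /scan.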

Visualize Sensor Data

- Echo LIDAR Scan:

ros2 topic echo /scan

- View Camera Images with rqt_image_view:

ros2 run rqt_image_view rqt_image_view

- Add LaserScan to RViz:

- Click “Add” > “LaserScan”

- Set Topic to “/scan”

- Set Size to 0.05

- You should see laser scan overlay

Step 7: Create Custom Gazebo Worlds

Empty worlds are boring. Let’s create interesting environments for your robots.

Create a Simple World File

- Create Worlds Directory:

mkdir -p ~/ros2_ws/src/robot_description/worlds

- Create World File:

nano ~/ros2_ws/src/robot_description/worlds/warehouse.world

- Define the World:

<?xml version="1.0" ?>
<sdf version="1.6">
  <world name="warehouse">
    <!-- Physics -->
    <physics type="ode">
      <max_step_size>0.001</max_step_size>
      <real_time_factor>1.0</real_time_factor>
    </physics>
    <!-- Scene -->
    <scene>
      <ambient>0.4 0.4 0.4 1</ambient>
      <shadows>true</shadows>
    </scene>
    <!-- Light -->
    <light type="directional" name="sun">
      <cast_shadows>true</cast_shadows>
      <pose>0 0 10 0 0 0</pose>
      <diffuse>0.8 0.8 0.8 1</diffuse>
      <specular>0.1 0.1 0.1 1</specular>
      <attenuation>
        <range>1000</range>
      </attenuation>
      <direction>-0.5 0.5 -1</direction>
    </light>
    <!-- Ground Plane: SDF has no ground element, so the floor is a static model -->
    <model name="floor">
      <static>true</static>
      <pose>0 0 -0.001 0 0 0</pose>
      <link name="link">
        <collision name="collision">
          <geometry>
            <plane>
              <normal>0 0 1</normal>
              <size>100 100</size>
            </plane>
          </geometry>
        </collision>
        <visual name="visual">
          <geometry>
            <plane>
              <normal>0 0 1</normal>
              <size>100 100</size>
            </plane>
          </geometry>
          <material>
            <ambient>0.8 0.8 0.8 1</ambient>
            <diffuse>0.8 0.8 0.8 1</diffuse>
            <specular>0.1 0.1 0.1 1</specular>
          </material>
        </visual>
      </link>
    </model>
    <!-- Obstacles: Walls -->
    <model name="wall_north">
      <pose>0 10 1 0 0 0</pose>
      <static>true</static>
      <link name="box">
        <pose>0 0 0 0 0 0</pose>
        <collision name="collision">
          <geometry>
            <box>
              <size>20 0.2 2</size>
            </box>
          </geometry>
        </collision>
        <visual name="visual">
          <geometry>
            <box>
              <size>20 0.2 2</size>
            </box>
          </geometry>
          <material>
            <ambient>0.5 0.5 0.5 1</ambient>
          </material>
        </visual>
      </link>
    </model>
    <!-- Obstacle: Box -->
    <model name="obstacle_box">
      <pose>2 -2 0.25 0 0 0</pose>
      <static>true</static>
      <link name="box">
        <collision name="collision">
          <geometry>
            <box>
              <size>0.5 0.5 0.5</size>
            </box>
          </geometry>
        </collision>
        <visual name="visual">
          <geometry>
            <box>
              <size>0.5 0.5 0.5</size>
            </box>
          </geometry>
          <material>
            <ambient>1 0 0 1</ambient>
          </material>
        </visual>
      </link>
    </model>
  </world>
</sdf>

- Load the World:

ros2 launch gazebo_ros gazebo.launch.py world:=$HOME/ros2_ws/src/robot_description/worlds/warehouse.world
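Writing every obstacle by hand gets tedious once a world has more than a few of them. One option is to generate the `<model>` elements with a short script and paste the output into the world file. The sketch below builds static box models with the same structure as the obstacle box in the world file; the helper name `box_model` and the row-of-crates layout are assumptions for illustration.

```python
import xml.etree.ElementTree as ET


def box_model(name, size, pose):
    """Build a static SDF <model> with matching box collision and visual."""
    model = ET.Element("model", name=name)
    ET.SubElement(model, "pose").text = " ".join(str(v) for v in pose)
    ET.SubElement(model, "static").text = "true"
    link = ET.SubElement(model, "link", name="box")
    for tag in ("collision", "visual"):
        shape = ET.SubElement(link, tag, name=tag)
        box = ET.SubElement(ET.SubElement(shape, "geometry"), "box")
        ET.SubElement(box, "size").text = " ".join(str(v) for v in size)
    return model


# Hypothetical layout: three 0.5 m crates in a row, 2 m apart, resting on the floor.
crates = [
    box_model(f"crate_{i}", (0.5, 0.5, 0.5), (2.0 * i, -2.0, 0.25, 0, 0, 0))
    for i in range(3)
]

for crate in crates:
    print(ET.tostring(crate, encoding="unicode"))
```

Each printed element goes inside the `<world>` element. The z value of the pose is half the box height so that the crate sits on the ground rather than intersecting it.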

Step 8: Simulate TurtleBot 3

TurtleBot 3 is one of the most widely used educational robots. Let’s simulate it to learn mobile robot development.

- Install TurtleBot 3 Packages (the quotes stop the shell from expanding the wildcard):

sudo apt install 'ros-humble-turtlebot3*' -y

- Set Robot Model:

echo "export TURTLEBOT3_MODEL=burger" >> ~/.bashrc
source ~/.bashrc

- Launch TurtleBot 3 Simulation:

ros2 launch turtlebot3_gazebo turtlebot3_world.launch.py

- Verify Simulation: Gazebo should open with TurtleBot 3 in a world with obstacles.

Control TurtleBot 3

- Publish Velocity Commands:

ros2 topic pub /cmd_vel geometry_msgs/msg/Twist "{linear: {x: 0.2, y: 0.0, z: 0.0}, angular: {x: 0.0, y: 0.0, z: 0.0}}"

- Teleop Control:

ros2 run turtlebot3_teleop teleop_keyboard

- Launch SLAM for Mapping:

ros2 launch turtlebot3_cartographer cartographer.launch.py use_sim_time:=True
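A Twist message on /cmd_vel only carries a forward speed and a turn rate; the differential-drive base turns that into individual wheel speeds. The sketch below shows the standard conversion. The wheel radius and wheel separation are assumed values for the Burger model and should be checked against the robot description you are actually using; the function name is hypothetical.

```python
def twist_to_wheel_speeds(linear_x, angular_z, wheel_separation, wheel_radius):
    """Return (left, right) wheel angular velocities in rad/s
    for a differential-drive base."""
    left = (linear_x - angular_z * wheel_separation / 2.0) / wheel_radius
    right = (linear_x + angular_z * wheel_separation / 2.0) / wheel_radius
    return left, right


# Assumed Burger geometry (verify against your model before relying on it).
WHEEL_SEPARATION = 0.160  # m
WHEEL_RADIUS = 0.033      # m

# The straight-line command published above: 0.2 m/s forward, no rotation.
left, right = twist_to_wheel_speeds(0.2, 0.0, WHEEL_SEPARATION, WHEEL_RADIUS)
print(f"straight: left={left:.2f} rad/s, right={right:.2f} rad/s")

# Turning in place: the wheels spin in opposite directions.
left_turn, right_turn = twist_to_wheel_speeds(0.0, 1.0, WHEEL_SEPARATION, WHEEL_RADIUS)
print(f"rotate:   left={left_turn:.2f} rad/s, right={right_turn:.2f} rad/s")
```

Equal wheel speeds mean straight-line motion, and any difference between them produces rotation, which is why a small angular.z is enough to steer the robot.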

Next Challenge: After mastering TurtleBot 3, try implementing autonomous navigation using the Navigation 2 stack. Use SLAM to map the environment, then navigate to goal positions autonomously!

Troubleshooting Common Issues

Issue 1: Gazebo Freezes or Won’t Start

- Cause: Graphics driver issues

- Solutions:

LIBGL_ALWAYS_SOFTWARE=1 gazebo # Use software rendering

sudo ubuntu-drivers autoinstall # Or update graphics drivers

Issue 2: Robot Falls Through Floor

- Cause: Collision geometry missing or wrong

- Solution: Ensure every link has a <collision> element
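A quick way to audit a model for this problem is to list the links that lack a given child element. The sketch below does this with the standard library; the sample robot and the function name `links_without` are hypothetical. Note that a link attached by a fixed joint, such as a small sensor mount, can work without its own collision geometry, so treat the output as a list to review rather than a list of errors.

```python
import xml.etree.ElementTree as ET


def links_without(urdf_text, tag):
    """Return the names of links that have no direct child element called tag."""
    robot = ET.fromstring(urdf_text)
    return [
        link.get("name")
        for link in robot.findall("link")
        if link.find(tag) is None
    ]


# Hypothetical model: "sensor_mount" has a visual but no collision or inertial.
SAMPLE_ROBOT = """
<robot name="demo">
  <link name="chassis">
    <visual><geometry><box size="0.2 0.2 0.1"/></geometry></visual>
    <collision><geometry><box size="0.2 0.2 0.1"/></geometry></collision>
    <inertial>
      <mass value="1.0"/>
      <inertia ixx="0.01" ixy="0" ixz="0" iyy="0.01" iyz="0" izz="0.01"/>
    </inertial>
  </link>
  <link name="sensor_mount">
    <visual><geometry><box size="0.02 0.02 0.02"/></geometry></visual>
  </link>
</robot>
"""

for tag in ("collision", "inertial"):
    print(f"links without <{tag}>:", links_without(SAMPLE_ROBOT, tag))
```

To check your own model, read the file contents into a string and pass it in place of `SAMPLE_ROBOT`.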

Issue 3: Model Not Visible in Gazebo

- Cause: URDF not properly loaded

- Solution:

ros2 topic info /robot_description # Check that the robot_description topic exists

ros2 topic echo /robot_description | head -20 # Inspect the first lines of the published URDF

Issue 4: Controllers Not Loading

- Cause: ros2_control plugin not configured

- Solution: Verify gazebo_ros2_control plugin is in URDF and config file path is correct

Next Steps: Continue Learning

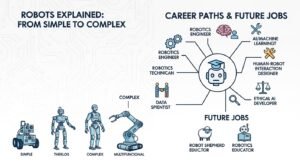

You’ve learned to simulate robots in Gazebo. Here’s where to go next:

Expand Your Skills

- Add More Sensors: IMU, GPS, force/torque sensors, depth cameras

- Multi-Robot Simulation: Simulate robot teams working together

- Custom Plugins: Write Gazebo plugins for custom hardware

- Actor Simulation: Simulate moving humans in the environment

YouTube Channels

- Articulated Robotics – ROS 2 and Gazebo tutorials

- The Construct Sim – Interactive robot simulation courses

- F1/10 Autonomous Racing – Advanced Gazebo applications

Conclusion: You Can Now Simulate Robots!

You’ve learned to create robot models in URDF, spawn them in Gazebo, add sensors, and control them using ROS 2. This is a crucial skill that enables rapid development without expensive hardware.

Gazebo simulation opens doors to:

- Algorithm Development: Test navigation, manipulation, and perception algorithms

- Regression Testing: Automatically test your code against simulation scenarios

- Continuous Integration: Run automated tests in simulation as part of your build pipeline

- Education: Learn robotics without access to expensive hardware

Your Challenge: Create a complete mobile robot simulation with LIDAR, autonomous navigation, and obstacle avoidance. Use the Navigation 2 stack to make your robot explore and map an unknown environment autonomously!

From here, explore autonomous navigation with Navigation 2, robot arm manipulation with MoveIt, or computer vision integration with OpenCV. The simulation skills you’ve learned apply to every robotics project.

Related Guides: Continue your learning with Autonomous Navigation with ROS 2, Introduction to Robot Kinematics, Building Your First ROS Package, and Computer Vision with ROS 2 and OpenCV.